Most product teams got faster when they adopted AI. Almost none got smarter.

DORA measured 5,000 engineering teams. The results: AI adoption at 95%. Individual productivity up 21%. Organizational delivery unchanged. Code review times up 91%. Bug rates rising.

The speed is real. The outcomes are not following. This is the innovation velocity problem. And the reason most product leaders are missing it is that they are measuring the wrong thing.

Speed Without Direction Is High-Speed Waste

Velocity is a physics term. It combines two things: speed and direction. Most teams measure speed. Almost none measure direction.

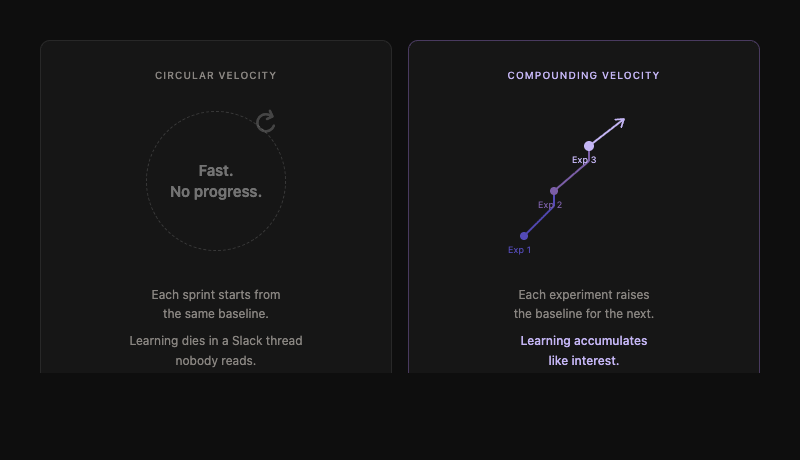

There is a specific failure mode worth naming. Call it Circular Velocity. Moving fast, generating output, running experiments, shipping features. But moving in circles. The learning from one experiment never makes it into the next. The organization is busy. It is not compounding.

When a team can generate ten times the output, the question is no longer how fast they are moving. It is whether they are moving toward the right thing. Speed on the wrong problem is not innovation. It is high-speed waste.

AI accelerated this dynamic. Teams using AI code 55% faster. Prototyping timelines compress from months to weeks. Every metric that measures motion has improved. But faster execution on the wrong problem is not a velocity win. It is a compounding liability.

DORA's research makes the mechanism visible. AI tool adoption did not translate into organizational delivery improvement. It translated into individual output improvement inside systems that were never redesigned to absorb that output. The individual gets faster. The system stays slow. The gap between the two is where the investment disappears.

What Innovation Velocity Actually Measures

Innovation velocity is not a speed metric. It is a compounding metric.

The question is not "How many features did we ship this quarter?" It is "How much did we learn per experiment, and how quickly did we apply that learning to the next one?"

Three things determine whether innovation velocity is actually compounding.

Experiment quality over volume. Most teams optimize for running more experiments. The winners optimize for running better ones. An experiment without a clear hypothesis and pre-defined success criteria is not an experiment. It is a feature with a story around it. Its learning value is zero.
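To make the distinction concrete, here is a minimal Python sketch. It assumes an experiment is recorded as a hypothesis plus numeric success thresholds that are written down before launch. The `Experiment` class and its field names are hypothetical and not part of any particular tool. Anything missing a hypothesis or criteria is refused rather than scored.

```python
from dataclasses import dataclass, field


@dataclass
class Experiment:
    """Hypothetical experiment record; field names are illustrative."""
    name: str
    hypothesis: str = ""
    # Pre-defined success criteria, written before launch:
    # {"metric": minimum value the metric must reach}.
    success_criteria: dict[str, float] = field(default_factory=dict)

    def is_real_experiment(self) -> bool:
        # No hypothesis or no criteria means "a feature with a story around it".
        return bool(self.hypothesis.strip()) and bool(self.success_criteria)

    def evaluate(self, observed: dict[str, float]) -> bool:
        """Return True only if every pre-defined criterion is met."""
        if not self.is_real_experiment():
            raise ValueError(f"{self.name}: no hypothesis or success criteria")
        # A metric that was never observed counts as a failure.
        return all(
            observed.get(metric, float("-inf")) >= threshold
            for metric, threshold in self.success_criteria.items()
        )


onboarding = Experiment(
    name="shorter-onboarding",
    hypothesis="Cutting onboarding from 5 steps to 3 raises activation",
    success_criteria={"activation_rate": 0.30},
)
print(onboarding.evaluate({"activation_rate": 0.34}))  # True
```

Because the criteria are fixed at creation time, the result cannot be reinterpreted after the data comes in.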

Decision speed at the right level. The bottleneck in 2026 is rarely the execution layer. It is the decision layer. Innovation stalls when an engineer builds something in two days and then waits two weeks for a stakeholder to decide whether to pivot or persevere. Successful organizations push decision authority closer to the work while setting clear guardrails at the top.
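One way to picture "decision authority close to the work, guardrails at the top" is as a simple policy check. The sketch below is hypothetical. The guardrail dimensions (spend, share of users exposed, reversibility) and the thresholds are illustrative assumptions, not a prescribed framework. Decisions inside the guardrails stay with the team, and anything outside them escalates.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Guardrails:
    """Limits set at the top. Dimensions and values are hypothetical."""
    max_spend_usd: float
    max_users_exposed_pct: float
    reversible_only: bool = True


@dataclass(frozen=True)
class Decision:
    description: str
    spend_usd: float
    users_exposed_pct: float
    reversible: bool


def decide_locally(decision: Decision, rails: Guardrails) -> bool:
    """Return True if the team closest to the work may make this call
    itself; False means the decision escalates."""
    if rails.reversible_only and not decision.reversible:
        return False
    return (
        decision.spend_usd <= rails.max_spend_usd
        and decision.users_exposed_pct <= rails.max_users_exposed_pct
    )


rails = Guardrails(max_spend_usd=5_000, max_users_exposed_pct=10.0)
pivot = Decision("Pivot onboarding test to variant B", 1_200, 5.0, True)
print(decide_locally(pivot, rails))  # True: inside the guardrails
```

The leadership effort goes into setting the guardrails once, not into approving each pivot.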

Evaluations that survive the model. Durable organizations build model-agnostic eval frameworks. When the underlying AI model changes, their measurement system survives. Everyone else starts their learning from scratch.
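Here is a minimal sketch of a model-agnostic eval harness. It assumes any model can be reduced to a function from prompt text to output text. The names `Model`, `EvalCase`, and `run_evals` are hypothetical. The eval cases and checks never reference a specific model or vendor SDK, so swapping the model means swapping one function while the measurement stays comparable.

```python
from dataclasses import dataclass
from typing import Callable

# Every model is reduced to this one signature: prompt in, text out.
Model = Callable[[str], str]


@dataclass(frozen=True)
class EvalCase:
    prompt: str
    check: Callable[[str], bool]  # judges the output only, never the model


def run_evals(model: Model, cases: list[EvalCase]) -> float:
    """Return the fraction of cases whose output passes its check."""
    if not cases:
        raise ValueError("no eval cases")
    passed = sum(1 for case in cases if case.check(model(case.prompt)))
    return passed / len(cases)


# Stub models standing in for two model versions (hypothetical).
def old_model(prompt: str) -> str:
    return "Paris" if "France" in prompt else "5"


def new_model(prompt: str) -> str:
    return "Paris" if "France" in prompt else "4"


cases = [
    EvalCase("Capital of France?", lambda out: "paris" in out.lower()),
    EvalCase("2 + 2 =", lambda out: "4" in out),
]
print(run_evals(old_model, cases))  # 0.5
print(run_evals(new_model, cases))  # 1.0
```

In practice each stub would wrap whatever client the team uses. The eval suite, and the history of scores it produces, carries over unchanged.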

The Compounding Gap

What separates the winners from the fast-but-flat teams is the learning loop.

| | Group A: The Winners | Group B: The Rest |
|---|---|---|
| View of experiments | An investment in the next one | A standalone event |
| Data usage | Today's eval data informs tomorrow's spec | Data lives in unread reports |
| Knowledge | Accumulates like interest | Dies in a Slack thread |
| Baseline | Rises with every cycle | Starts from zero every time |

Teams report velocity gains of 15% or more from adopting AI tools. That is a one-time lift, and it plateaus. The organizations that are genuinely compounding are not 15% faster. They are running experiments in days that used to take months. Not because they bought better tools, but because they redesigned how learning flows from one experiment to the next.
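The difference between a one-time lift and compounding is easiest to see with arithmetic. This sketch assumes a normalized baseline of 1.0 and takes the 15% one-time lift from the text. It also assumes a hypothetical 5% improvement per cycle for the compounding team. The 5% figure is illustrative, not measured.

```python
from typing import Optional


def one_time_lift(cycles: int, lift: float = 0.15) -> list[float]:
    """Output per cycle after a single jump of `lift`, then a plateau."""
    return [1.0 + lift] * cycles


def compounding(cycles: int, rate: float = 0.05) -> list[float]:
    """Output per cycle when each cycle's learning raises the next baseline
    by `rate` (an assumed, illustrative rate)."""
    return [(1.0 + rate) ** n for n in range(1, cycles + 1)]


def crossover_cycle(lift: float = 0.15, rate: float = 0.05,
                    max_cycles: int = 1_000) -> Optional[int]:
    """First cycle at which compounding beats the one-time lift."""
    for n in range(1, max_cycles + 1):
        if (1.0 + rate) ** n > 1.0 + lift:
            return n
    return None


print(f"{one_time_lift(12)[-1]:.2f} vs {compounding(12)[-1]:.2f}")  # 1.15 vs 1.80
print(crossover_cycle())  # 3
```

Under these assumptions the compounding team overtakes the one-time lift by the third cycle and keeps pulling away. The exact numbers matter less than the shape of the two curves: one is flat, the other keeps rising.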

That is the difference between speed and velocity. Between moving fast and moving forward.

Three Shifts That Build Real Innovation Velocity

From output to learning. Stop tracking features shipped per quarter. Start tracking hypotheses validated and what changed as a result. If you shipped and did not learn something that changed the next experiment, that sprint was a failure. A practical starting point: for every experiment that ships, require a three-line post-mortem. What did we expect? What happened? What does the next experiment look like as a result?
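The three-line post-mortem maps naturally onto a small record. This is a hypothetical sketch: `PostMortem` and `sprint_learned` are illustrative names. Comparing the revised next experiment against the one already planned is a deliberately crude proxy for "learned something that changed the next experiment."

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PostMortem:
    """The three lines required for every shipped experiment."""
    expected: str
    happened: str
    next_experiment: str

    def render(self) -> str:
        return (
            f"Expected: {self.expected}\n"
            f"Happened: {self.happened}\n"
            f"Next: {self.next_experiment}"
        )


def sprint_learned(post_mortem: PostMortem, planned_next: str) -> bool:
    """Crude check: did the post-mortem change the next experiment
    relative to what was planned before results came in?"""
    revised = post_mortem.next_experiment.strip().lower()
    return bool(revised) and revised != planned_next.strip().lower()


pm = PostMortem(
    expected="3-step onboarding lifts activation",
    happened="Activation rose on mobile, flat on desktop",
    next_experiment="Test 3-step onboarding on mobile only",
)
print(pm.render())
print(sprint_learned(pm, planned_next="Roll 3-step onboarding out everywhere"))  # True
```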

From isolated experiments to portfolios. Do not plan the next move only after the current one ends. Run parallel experiment portfolios where the results of one bet explicitly refine the parameters of the next. This requires strategic coherence in experiment design that most roadmap processes do not support. It is worth rebuilding the process.
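One concrete way for the results of one bet to refine the parameters of the next is Bayesian updating. This sketch assumes each bet has a binary success metric and uses the standard conjugate Beta-Binomial update, so each round's posterior becomes the next round's prior. Splitting the next round's budget in proportion to posterior means is a simple illustrative heuristic, not Thompson sampling or any optimal allocation rule. The bet names and round-one numbers are made up.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Belief:
    """Beta(alpha, beta) belief about a bet's success rate."""
    alpha: float = 1.0  # Beta(1, 1) is a uniform prior
    beta: float = 1.0

    @property
    def mean(self) -> float:
        return self.alpha / (self.alpha + self.beta)

    def update(self, successes: int, trials: int) -> "Belief":
        """Conjugate Beta-Binomial update: this result is the next prior."""
        if not 0 <= successes <= trials:
            raise ValueError("need 0 <= successes <= trials")
        return Belief(self.alpha + successes, self.beta + trials - successes)


def allocate(beliefs: dict[str, Belief], budget: int) -> dict[str, int]:
    """Split the next round's budget (e.g. users) in proportion to each
    bet's posterior mean. Rounded, so totals can be off by one."""
    total = sum(b.mean for b in beliefs.values())
    return {name: round(budget * b.mean / total) for name, b in beliefs.items()}


portfolio = {"onboarding": Belief(), "pricing-page": Belief()}
# Round one results (hypothetical numbers) update both bets in parallel.
portfolio["onboarding"] = portfolio["onboarding"].update(successes=30, trials=100)
portfolio["pricing-page"] = portfolio["pricing-page"].update(successes=10, trials=100)
print(allocate(portfolio, budget=1000))  # {'onboarding': 738, 'pricing-page': 262}
```

The parameters of round two come directly from round one. That is the explicit link between bets that a sequential roadmap tends to lose.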

From velocity as a metric to velocity as a system property. Velocity is not something you do to a team. It is something that emerges from a system. The spec discipline, the eval frameworks, the decision architecture: these are the system. The velocity is the output. Build the system. The velocity follows.

The Bottom Line

The AI era did not change the requirements of innovation. It simply lowered the cost of being wrong.

When experiments are cheap, the limiting factor shifts from execution to judgment. From how quickly you can build to how clearly you can think.

The product leaders winning are not the ones with the fastest teams. They are the ones whose teams get smarter with every experiment they run. Their baseline rises every cycle. Their competitors are still moving in circles.

If this resonates with your organization's current state

A 2-week AI Delivery Diagnostic is the fastest way to understand the gap and what to do about it.

Book a call directly. No pitch, no commitment.