84% of AI implementation failures are leadership-driven. Not technical. Not infrastructure. Not algorithms. Leadership.

RAND Corporation tracked over 2,400 enterprise AI initiatives through 2025 to reach that conclusion. A sample of that size is hard to dismiss as noise. And yet the conversation in most boardrooms is still about models, vendors, and tooling.

What the Data Actually Shows

In 2025, global enterprises invested $684 billion in AI. By year-end, over $547 billion of it, roughly 80%, had failed to deliver its intended business value. The average sunk cost per abandoned initiative: $7.2 million.

The causes are not subtle. They are leadership decisions. 73% of failed projects lacked clear executive alignment on success metrics. 68% underinvested in data governance. 61% treated AI as an IT project rather than a business transformation. And 56% lost active C-suite sponsorship within six months.

Read that last number again. More than half of failed AI projects lost their executive sponsor before the work was done.

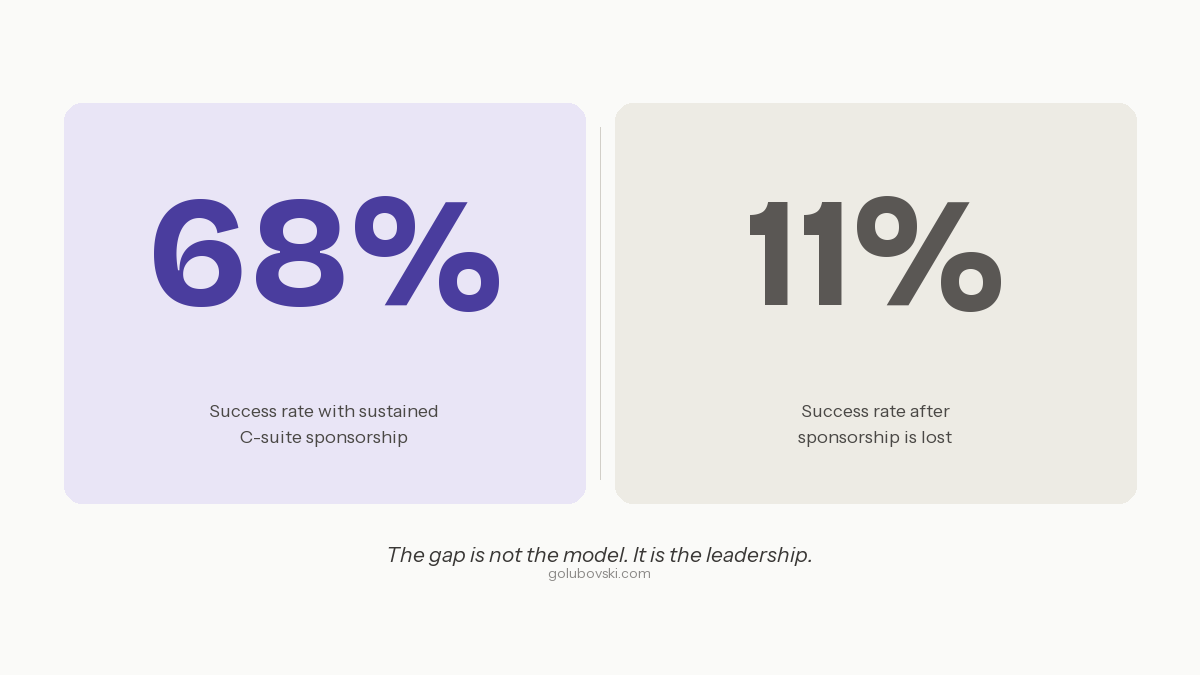

Projects with sustained CEO involvement achieve a 68% success rate. Projects that lose sponsorship: 11%. That gap is larger than the gain from any model improvement, tooling upgrade, or infrastructure investment available today.

Why C-Suite Sponsorship Collapses

Executives don't abandon AI because it fails. They abandon it because it gets uncomfortable.

The data isn't ready. The workflow wasn't redesigned. The team doesn't have the skills to evaluate outputs. Results don't materialize on the timeline the board was expecting. And then the next priority arrives — and the executive moves on.

This is not a failure of the AI. It is a failure of how the initiative was set up. Three patterns predict whether sponsorship holds or collapses.

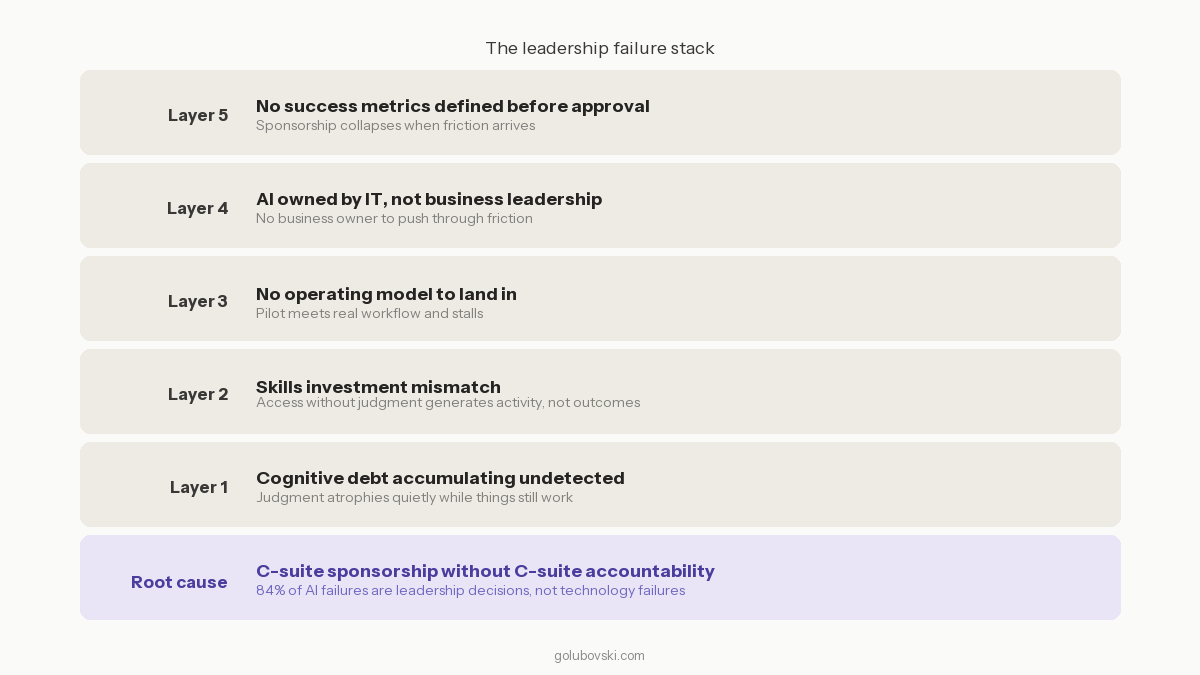

No success metrics defined before approval. When there is no agreed definition of what winning looks like, every setback becomes a reason to question the investment. The projects that hold sponsorship are the ones where the executive can point to a specific, measurable outcome. Ambiguity is what allows sponsorship to quietly disappear.

AI is owned by IT instead of product and business leadership. When AI transformation lives inside IT, it gets treated as a technology deployment. Business stakeholders don't feel ownership. When friction arrives, there is no business owner with skin in the game to push through it. The sponsor has no reason to fight for something they never really owned.

No operating model to land in. The pilot worked. Production didn't. The executive who championed the pilot cannot explain why. Nobody owns the actual problem, which is that the organization was never redesigned to operate around the AI it deployed. The sponsor, having no framework to diagnose the failure, concludes the investment was wrong and moves on.

The Skills Investment Mismatch

There is a second failure pattern sitting underneath the sponsorship problem. It is less visible and more expensive over time.

Organizations are investing heavily in AI tools and comparatively little in the human capability to use them well. Most AI investment goes into technology; only a small fraction goes into developing the judgment and operating discipline required to extract value from it.

This produces a predictable outcome. People have access to powerful tools they are not equipped to use strategically. They use AI for the tasks easiest to delegate — summarizing, drafting, searching — rather than the tasks where it compounds. The investment generates activity, not outcomes.

AI competency is a checklist: prompting tools, summarizing documents, running analyses. These skills matter, but they are table stakes. AI literacy is different. People who are AI literate use the technology to take their own thinking further, not to replace it. Organizations that cannot tell the difference between the two are buying the checklist and calling it a strategy.

Cognitive Debt: The Cost Nobody Is Tracking

There is a third failure pattern that the data is only beginning to capture. It is likely to become the most consequential one over the next three to five years.

Cognitive debt is what accumulates when organizations delegate high-judgment tasks to AI without building the human capability to direct, evaluate, and override those decisions.

You don't notice cognitive debt while things are working. You only notice it when you need judgment, and it's gone.

Every organization deploying AI without a deliberate capability-building strategy is accumulating it. People accept outputs they haven't critically evaluated. The organizational muscle for certain kinds of judgment atrophies quietly. And when a complex, novel problem arrives — the kind that requires real human judgment — the organization discovers it has been slowly delegating the very capability it needs.

This is a leadership decision. Not a technology one. The organizations building AI literacy, not just AI access, are the ones whose returns will compound. The ones building only access are financing a debt they will eventually have to pay.

What the C-Suite Actually Needs to Do

If you can't define success before funding the initiative, don't fund it.

That is the single most important sentence in this section. Not because it sounds tough, but because 73% of failed AI projects lacked that definition. The absence of it is not an oversight. It is an invitation for the initiative to fail quietly while everyone finds someone else to blame.

Beyond that, three decisions separate the organizations generating returns from the ones generating the $7.2 million average sunk cost.

Own the operating model question, not just the technology question. The question is not which AI tools to buy. It is how the organization needs to change to get value from AI. That is a business transformation question. It belongs in the C-suite, not the IT department.

Invest in judgment, not just access. The skills investment mismatch is a strategic risk, not an HR problem. The organizations pulling ahead are building genuine AI literacy — teams that can specify, evaluate, govern, and improve AI systems over time. That capability does not come from tool subscriptions.

Stay in the room. Sponsorship is not a signature on a budget approval. It is showing up when the friction arrives. The 68% versus 11% success rate differential is the clearest finding in the data, and no model upgrade, vendor change, or infrastructure investment comes close to it. Sustained leadership presence moves the outcome more than any other variable.

Five failure patterns recur across most failed AI initiatives, and each layer compounds the one below it. The root cause is leadership.

The Bottom Line

84% is not a technology failure rate. It is a leadership failure rate.

The money is being spent. The tools are being deployed. The pilots are being run. And too many executives are treating their role as approving the investment and waiting for the results.

AI doesn't fail in the model layer. It fails in the leadership layer. And that's the only place it can be fixed.

If this resonates with your organization's current state

A 2-week AI Delivery Diagnostic is the fastest way to understand the gap and what to do about it.

Book a call directly: no pitch, no commitment.